
Searching for duplicate files by MD5 hash is one of the new features in NoClone since 2011 (V5.

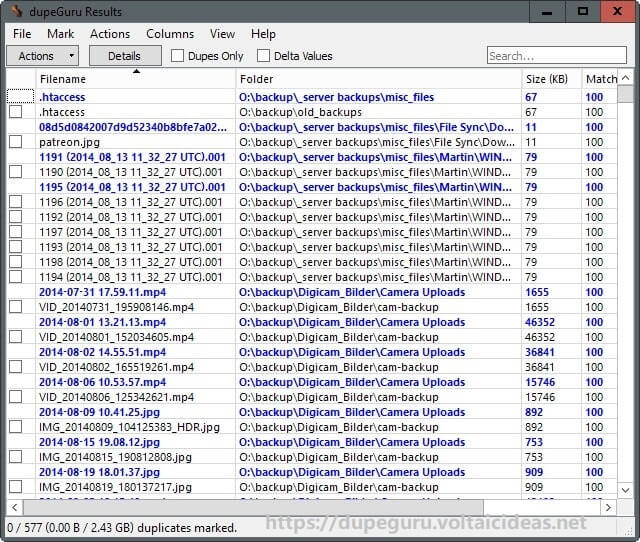

Now you can find duplicate files by MD5 hash instead of a true byte-by-byte comparison, which uncovers more suspected duplicate files. An MD5 hash is a fingerprint of a file's contents, and two files with the same hash are almost certainly identical, so this feature is a powerful way to find exact duplicates.

FreeCommander is an easy-to-use alternative to the standard Windows file manager. The program helps you with daily work in Windows. Here you can find all the necessary functions to manage your data stock: file/duplicate search, filtering, MD5 calc / verify, folder compare. You can take FreeCommander anywhere: just copy the installation directory to a CD or USB stick, and you can even work with this program on a foreign computer. Video Duplicate Finder is cross-platform software (Windows and Linux) to find duplicate video files. Duplicate Files Finder - MD5 Hash CheckSum.

The md5sum command in Linux: MD5, short for Message-Digest algorithm 5, is a cryptographic hashing algorithm. The long string it produces is called a hash, and for practical purposes it identifies a file's contents.

I have a folder with duplicate files (by md5sum, or md5 on a Mac), and I want to schedule a cron job to remove any that are found. It's pretty simple: if 'file' 'f' & 'md' 'm' then echo 'Files file and f are duplicates.'

The script reads the INPUTFILE line by line and stores each line as a key in the associative array a, with the string nonempty as the value. Before storing a line, it first checks whether the key is already there. If it is, the script has found a duplicate, so it sets the duplicates flag and breaks out of the loop. awk -F'|' '' # save in an array named a, index = the 1st column (md5), value is the whole line.

For this article I wrote a quick bash script to dump all potential duplicate file names and to group them by the same MD5 hashes to find potential duplicate files.
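The idea of grouping files by identical MD5 hashes can be sketched in Python as follows. This is a minimal illustration, not the bash script mentioned in the text: the function names `md5_of_file` and `group_by_md5` are hypothetical, and it assumes every file under the directory is readable.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def md5_of_file(path, chunk_size=65536):
    """Return the hex MD5 digest of a file, reading in chunks to bound memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def group_by_md5(directory):
    """Map each MD5 digest to the files under `directory` that share it.

    Hypothetical helper: only digests seen on two or more files are returned,
    since those are the potential duplicates.
    """
    groups = defaultdict(list)
    for path in sorted(Path(directory).rglob("*")):
        if path.is_file():
            groups[md5_of_file(path)].append(path)
    return {digest: paths for digest, paths in groups.items() if len(paths) > 1}
```

Calling `group_by_md5("some/folder")` returns a dictionary whose values are lists of paths with identical contents. Deleting files is deliberately left out; reviewing each group first is the safer choice.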
You don't really need a loop, let alone two, if you decide to solve it with awk. It is something like a nuclear warhead in text processing.

Running md5sum on two files generates a long and seemingly random string of characters for each. There are a lot of ready-to-use programs that combine several methods of finding duplicate files, such as checking the file size and MD5 signatures. Grep is a Linux / Unix command-line tool used to search for a string of characters in a specified file.

I have had trouble finding any information on how to write a bash script to find duplicate image files in a directory and remove them. I want to remove duplicate sequences based on the nucleotide sequence rather than the ID. How can I do this? Thank you to all.

However, when I run the script to see if I have duplicate files, file1 finds file1, and so it thinks they are duplicates even though they are the same file.
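Two points from the text above can be illustrated together: combining a file-size check with MD5 signatures, and avoiding the case where a file is reported as a duplicate of itself. The sketch below is one possible approach under stated assumptions; `duplicate_pairs` is a hypothetical name, and each file is read fully into memory, which only suits small files.

```python
import hashlib
import os
from collections import defaultdict
from itertools import combinations


def duplicate_pairs(paths):
    """Return pairs of distinct paths whose size and MD5 digest both match."""
    # Drop repeated entries so the same path is never compared with itself.
    unique_paths = list(dict.fromkeys(paths))

    by_size = defaultdict(list)
    for path in unique_paths:
        by_size[os.path.getsize(path)].append(path)

    pairs = []
    for same_size in by_size.values():
        if len(same_size) < 2:
            continue  # a file with a unique size cannot have a duplicate
        by_digest = defaultdict(list)
        for path in same_size:
            with open(path, "rb") as handle:
                by_digest[hashlib.md5(handle.read()).hexdigest()].append(path)
        for group in by_digest.values():
            # combinations() only yields pairs of two different list items.
            pairs.extend(combinations(group, 2))
    return pairs
```

Checking sizes first means files with a unique size are never hashed at all, which is the main saving when most files differ.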

Each line has two sections: the first is the md5sum of a file and the second is the filename. I have a double loop that opens a file and uses awk to take the first section and the second section of each line.
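A listing like the one described, with the hash first and the filename second, can be processed in a single pass instead of a double loop. The following is a rough sketch that assumes whitespace separates the two sections; the function name `duplicates_from_listing` is hypothetical, and a different delimiter would need a different split.

```python
from collections import defaultdict


def duplicates_from_listing(lines):
    """Group filenames by digest from lines shaped like '<md5> <filename>'."""
    seen = defaultdict(list)
    for line in lines:
        parts = line.strip().split(maxsplit=1)
        if len(parts) != 2:
            continue  # skip blank or malformed lines
        digest, filename = parts
        seen[digest].append(filename)
    # Keep only digests that appear on more than one line.
    return {digest: names for digest, names in seen.items() if len(names) > 1}
```

Because each line is looked up in a dictionary keyed by hash, every line is visited once, and a file is never compared against its own entry.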
